Parallel Selectivity Estimation for Optimizing Multidimensional Spatial Join Processing on GPUs

|

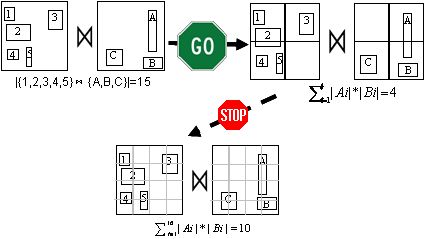

Managing large-scale data is typically memory intensive. The current generation of GPUs has much lower memory capacity than CPUs which is often a limiting factor in processing large data. It is desirable to reduce memory footprint in spatially joining large-scale datasets through query optimization. In this study, we present a technique of selectivity estimation for optimizing spatial join processing on GPUs. By seamlessly integrating multi-dimensional cumulative histograms and the summed-area-table algorithm, our technique can be efficiently realized on GPUs with good portability. Our experiments on spatially joining two sets of Minimum Bounding Boxes (MBBs) derived from real point and polygon data, each with about one million MBBs, have shown that computing the total numbers of MBB pairs at four grid levels took only about 3/4 second. By using the best grid resolution, our technique saves 38.4% memory for the spatial join. When histograms are materialized, it only took a few tens of milliseconds to search for the best grid level for the spatial join. |

Jianting Zhang, Simin You and Le Gruenwald. Parallel Selectivity Estimation for Optimizing Multidimensional Spatial Join Processing on GPUs. Technical Report [PDF]